
- Blog

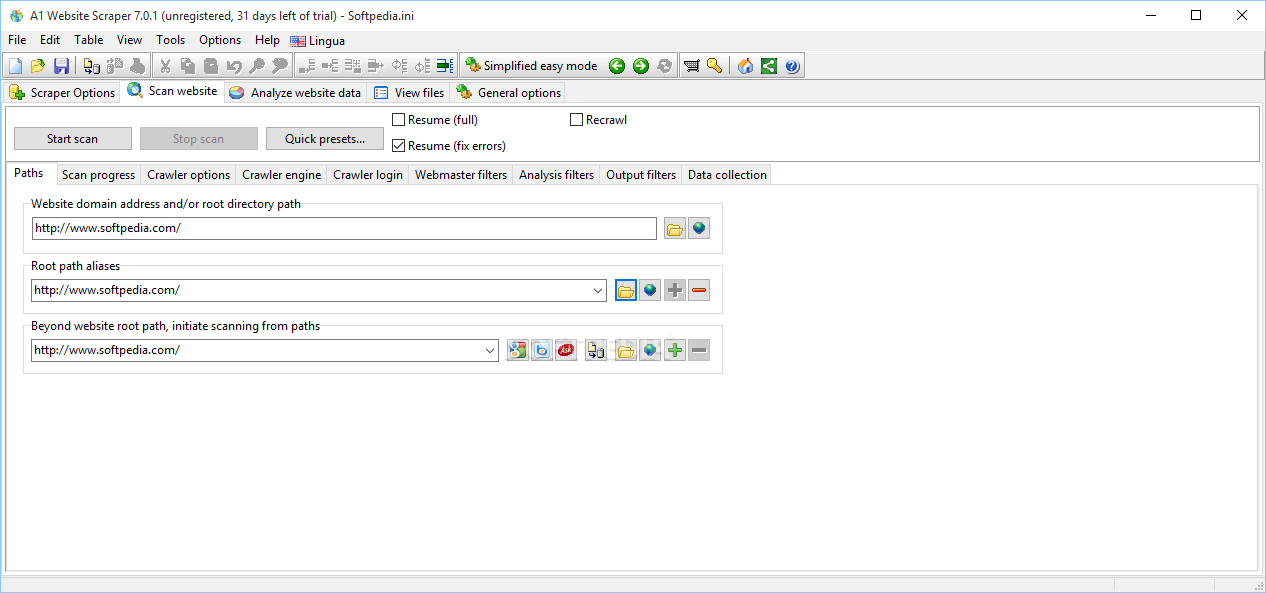

- A1 website scraper

- The secret society of super villains comic book

- Bankivity vs moneyspire

- Id and superego

- Pso2 gamma control

- House of gord com

- Waterfox search engine

- Download internet explorer 8 for windows 7 64 bits

- Affinity photo review 2021

- Goodway cooling tower vacuum

- Thinking rock sync with onedrive

- Axure rp 9 crack

- Prey book series in order

- Karabiner elements update

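Try the following to get your aforesaid fields across all the pages from that site:

```vba
Option Explicit

Sub GetScreenerData()
    ' base (site root) and the first Url are not given in the original answer;
    ' fill them in with the site and screener URL from the question.
    Const base$ = "..."
    Dim elem As Object, S$, R&, oPage As Object, nextPage$
    Dim Http As Object, Html As Object, ws As Worksheet, Url$

    Set ws = ThisWorkbook.Worksheets("Sheet1")   ' assumed output sheet name
    Set Http = CreateObject("MSXML2.XMLHTTP")
    Set Html = CreateObject("HTMLFile")
    Url = "..."

    Do While Url <> ""
        ' Fetch the current page with a browser-like User-Agent
        Http.Open "GET", Url, False
        Http.setRequestHeader "User-Agent", "Mozilla/5.0 (Windows NT 6.1) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/.121 Safari/537.36"
        Http.send
        S = Http.responseText
        Html.body.innerHTML = S

        ' Data rows carry one of two alternating CSS classes
        For Each elem In Html.getElementById("screener-content").getElementsByTagName("tr")
            If InStr(elem.className, "table-dark-row-cp") > 0 Or InStr(elem.className, "table-light-row-cp") > 0 Then
                R = R + 1: ws.Cells(R, 1) = elem.Children(0).innerText
                ws.Cells(R, 2) = elem.Children(1).innerText
                ws.Cells(R, 3) = elem.Children(2).innerText
                ws.Cells(R, 4) = elem.Children(3).innerText
                ws.Cells(R, 5) = elem.Children(4).innerText
            End If
        Next elem

        ' Follow the "next" pagination link; the loop ends when there is none
        Url = ""
        For Each oPage In Html.getElementsByTagName("a")   ' assumed: pagination links are <a> tags
            If InStr(oPage.className, "tab-link") > 0 And InStr(oPage.innerText, "next") > 0 Then
                nextPage = oPage.getAttribute("href")
                ' HTMLFile prefixes relative links with "about:"
                Url = base & Replace(nextPage, "about:", "")
                Exit For
            End If
        Next oPage
    Loop
End Sub
```

You don't need to add anything to the reference library to execute the above script.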
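The same row filter and "next"-link pagination can be sketched outside Excel. This is a minimal Python sketch using only the standard library, assuming the page markup matches what the VBA answer expects: rows whose class contains `table-dark-row-cp` or `table-light-row-cp`, and an `<a class="tab-link">` whose text contains "next". Unlike the VBA, it does not restrict the search to the `screener-content` element. The `fetch` callable is a hypothetical stand-in for the HTTP request so the loop can be tried offline, and `urljoin` plays the role of the VBA's `base & Replace(nextPage, "about:", "")`.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin

# Row classes taken from the VBA answer; assumed to match the site's markup.
ROW_CLASSES = ("table-dark-row-cp", "table-light-row-cp")


class ScreenerParser(HTMLParser):
    """Collect the first five cells of each data row and the 'next' link."""

    def __init__(self):
        super().__init__()
        self.rows = []          # one list of cell texts per data row
        self.next_href = None   # href of the first tab-link whose text has "next"
        self._row = None        # cells of the data row being read
        self._cell = None       # text pieces of the current <td>
        self._link_href = None  # href of the tab-link <a> being read
        self._link_text = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        cls = attrs.get("class") or ""
        if tag == "tr" and any(c in cls for c in ROW_CLASSES):
            self._row = []
        elif tag == "td" and self._row is not None:
            self._cell = []
        elif tag == "a" and "tab-link" in cls:
            self._link_href = attrs.get("href")
            self._link_text = []

    def handle_data(self, data):
        if self._cell is not None:
            self._cell.append(data)
        if self._link_href is not None:
            self._link_text.append(data)

    def handle_endtag(self, tag):
        if tag == "td" and self._cell is not None:
            self._row.append("".join(self._cell).strip())
            self._cell = None
        elif tag == "tr" and self._row is not None:
            # Ticker, EPS, EPS this Y, EPS next Y, Price (Children(0) to (4) in VBA)
            self.rows.append(self._row[:5])
            self._row = None
            self._cell = None
        elif tag == "a" and self._link_href is not None:
            if self.next_href is None and "next" in "".join(self._link_text):
                self.next_href = self._link_href
            self._link_href = None


def scrape_all(fetch, start_url, max_pages=200):
    """Follow 'next' links from start_url; fetch(url) must return page HTML."""
    rows, seen, url = [], set(), start_url
    while url and url not in seen and len(seen) < max_pages:
        seen.add(url)
        parser = ScreenerParser()
        parser.feed(fetch(url))
        rows.extend(parser.rows)
        # urljoin resolves a relative href against the current page URL
        url = urljoin(url, parser.next_href) if parser.next_href else None
    return rows
```

The `seen` set and `max_pages` guard stop the loop if a "next" link ever points back to a page that was already visited.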

The QueryTable-based code from the question:

```vba
Sheets("Data").Activate 'Name of sheet the data will be downloaded into.
Range(Selection, Selection.End(xlToRight)).Select
Range(Selection, Selection.End(xlDown)).Select

' str holds the page URL; web-query connection strings start with "URL;"
With Sheets("Data").QueryTables.Add(Connection:="URL;" & str, Destination:=Sheets("Data").Range("a1"))
    ' Remaining QueryTable settings are not shown in the original; Refresh performs the download.
    .Refresh BackgroundQuery:=False
End With

Sheets("Data").Range("a1").CurrentRegion.TextToColumns Destination:=Sheets("Data").Range("a1"), DataType:=xlDelimited, _
    TextQualifier:=xlDoubleQuote, ConsecutiveDelimiter:=False, Tab:=False, _
    Semicolon:=False, Comma:=True, Space:=False, Other:=True, OtherChar:=",", FieldInfo:=Array(1, 2)
Sheets("Data").Columns("A:B").ColumnWidth = 12
```
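The `TextToColumns` call above splits each line on commas (`Comma:=True`), keeps a double-quoted field such as `"1,000"` intact (`TextQualifier:=xlDoubleQuote`), and keeps empty fields between adjacent commas (`ConsecutiveDelimiter:=False`). As a rough illustration of those rules only, not of how Excel implements them, Python's `csv` module behaves the same way on such input; the sample lines are made up.

```python
import csv


def text_to_columns(lines):
    """Split comma-delimited lines, honouring double-quote text qualifiers
    and keeping empty fields (like ConsecutiveDelimiter:=False)."""
    return list(csv.reader(lines, delimiter=",", quotechar='"'))


rows = text_to_columns(['AAA,1.20,"1,000"', "BBB,,3"])
print(rows)
```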

A1 website scraper code

The A1 Scraper is good for mass-gathering URLs, text and the like with multiple conditions set. However, this scraping tool is designed to work only with regular expressions, which can greatly increase parsing time.

I want to scrape the data from the website above using VBA so that I get the five columns I need (Ticker, EPS, EPS this Y, EPS next Y, Price). There are 99 pages to loop through and each page has 20 tickers, which means I need to scrape almost 2,000 rows of data. I can do this with Power Query, but a refresh takes around 3 minutes, and I'm not sure whether scraping the data with VBA would be any faster. I'm new to VBA; my code below outputs the whole web page (not what I want), and it doesn't loop through pages 1 to 99.
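Looping over the 99 pages comes down to generating one request per page: with 20 tickers per page, 99 pages give 1,980 rows. A minimal sketch, assuming the site selects a page through a 1-based start-row offset in the query string; the `{start}` placeholder and the example URL are hypothetical, not the real screener's parameters.

```python
def page_offsets(pages=99, per_page=20):
    """1-based number of the first row on each page: 1, 21, 41, ..."""
    return [1 + per_page * i for i in range(pages)]


def page_urls(template, pages=99, per_page=20):
    """Fill a hypothetical '{start}' placeholder with each page's first row."""
    return [template.format(start=s) for s in page_offsets(pages, per_page)]


urls = page_urls("https://example.com/screener?r={start}")  # hypothetical URL
print(len(urls), urls[0], urls[-1])
```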

A1 website scraper full

The A1 Scraper by Microsys is a program mainly used to scrape websites and extract data in large quantities for later use in web services. It extracts text, URLs and similar data using multiple regexes and saves the output to a CSV file. The tool can be compared with other web harvesting and web scraping services.

- Go to the ScanWebsite tab and enter the site's URL in the Path subtab.
- Press the 'Start scan' button so that the crawler finds text, links and other data on the website and caches them.
- Enter the regex(es) into the Regex input area.
- Define the name and path of the output CSV file.

Important: the URLs you scrape data from have to pass the filters defined in both the analysis filters and the output filters, which are set on the Analysis filters and Output filters subtabs respectively. They must be set at the website analysis stage (mode).

The scraper automatically finds and extracts the data according to the regex patterns, and the result is stored in one CSV file for all the given URLs. Note that the whole set of regular expressions is run against every scraped page.

Using the scraper as a website crawler also lets you adjust the crawling speed according to service needs rather than server load.

If you need to extract data from a complex website, just disable Easy mode by pressing the button. A1 Scraper’s full tutorial is available here.
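The workflow described above (every regex run against every scraped page that passes both filter sets, with all matches collected in a single CSV file) can be sketched in a few lines of Python. This is an illustration of the idea, not A1 Scraper's implementation: the analysis and output filters are modeled as hypothetical predicate functions on the URL, and pages are passed in as an already-fetched `dict` of URL to HTML.

```python
import csv
import io
import re


def scrape_to_csv(pages, patterns, analysis_filter, output_filter):
    """Run every regex against every page that passes both filters.

    pages: dict mapping URL -> page HTML (already fetched).
    analysis_filter / output_filter: hypothetical predicates on the URL.
    Returns the text of a single CSV file with one row per match.
    """
    compiled = [re.compile(p) for p in patterns]
    out = io.StringIO()
    writer = csv.writer(out)
    writer.writerow(["url", "pattern", "match"])
    for url, html in pages.items():
        # A page must pass BOTH filter sets to be scraped.
        if not (analysis_filter(url) and output_filter(url)):
            continue
        for index, rx in enumerate(compiled):
            for m in rx.finditer(html):
                # Use the first capture group if the pattern has one.
                writer.writerow([url, index, m.group(1) if rx.groups else m.group(0)])
    return out.getvalue()
```

To save the result, write the returned text to a file opened with `open(path, "w", newline="")`.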